I have been quite bored lately, and when you are bored you want to make things. One thing I have always wanted to do is take a photo programmatically with my Mac’s built-in iSight camera, that is, have my code capture an image from the camera and hand it to my application for some fun manipulation.

A simple Google search mostly returns outdated results: old programs and material about capturing video with old APIs. However, when I recently dove into QTKit (the Objective-C framework for QuickTime) I found some promising things.

This post will be a straightforward demo of how to grab photos from your iSight camera (or any other connected camera). I’ll post some code and explain the different steps and what they mean. And… the best part about reading tutorials on QTKit and using the computer’s camera is that you get to see a lot of random pictures of the author. Here is my contribution, that is, what you should have at the end of this tutorial:

Doing this you notice how silly you look while compiling code.

Prerequisites

You should know some Objective-C, know how to use Xcode and have some basic knowledge of Cocoa (or Cocoa Touch, for iOS, although this code will not work on iOS).

Code

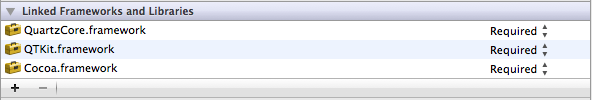

The first thing you have to do is include the QTKit framework in your Xcode project. While you’re at it, you’ll need the QuartzCore framework too (for image processing). Adding frameworks in Xcode 4 is a bit different from Xcode 3: select the project name in the file navigator and you get a list of the frameworks linked into your project. To add one, press the + button and find your framework.

To keep this simple I’ll just post a working code sample with lots and lots of comments.

This class presents you with an easy interface for grabbing photos. As illustrated:

Thanks so much for an extremely useful bit of code!

Thanks for this piece of code. It saved me a lot of stumbling and aggravation as I was trying to figure this out on my own.

However, when I test this in a small application, I see that something is getting allocated in memory that is not being released. I’ve tried to figure out what is happening and where, but just can’t seem to get it.

I’m not sure if it makes a difference when using garbage collection.

Any ideas?

Thanks again.

Any ideas on how to measure the ambient light using your iSight camera?

Thank you Erik – very good of you to publish a complete class. Are you happy for people to use this in commercial projects (with credit, of course)?

Dat – I have tried what you asked, by grabbing an image from the camera, reducing it to a single pixel and then measuring the brightness of that pixel. It works to a point, but the problem is that the camera adjusts its exposure for the light conditions, so you can measure very light and very dark but it’s very flat in between. It is much better to access the computer’s ambient light sensor (Google IOServiceGetMatchingService and AppleLMUController). This works really well, but I believe the calibration is different on different models of computer.
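Reducing a frame to a single pixel, as described above, amounts to averaging the brightness of every pixel in it. Below is a minimal sketch of that averaging step in plain C (which also compiles as Objective-C). It is not code from the original class: the function name `mean_brightness` is hypothetical, the frame is assumed to be already copied out as 8-bit RGBA with a known number of bytes per row, and the Rec. 601 luma weights are one common choice among several. The auto-exposure caveat above still applies to whatever number it returns.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: mean brightness (0.0 to 255.0) of an RGBA frame.
 * Assumptions: 8 bits per channel, channel order R, G, B, A, and
 * bytes_per_row >= width * 4 (rows may carry trailing padding). */
double mean_brightness(const uint8_t *rgba, size_t width, size_t height,
                       size_t bytes_per_row)
{
    if (rgba == NULL || width == 0 || height == 0) {
        return 0.0; /* nothing to measure */
    }

    double sum = 0.0;
    for (size_t y = 0; y < height; y++) {
        const uint8_t *row = rgba + y * bytes_per_row;
        for (size_t x = 0; x < width; x++) {
            const uint8_t *p = row + x * 4;
            /* Rec. 601 luma weights; alpha (p[3]) is ignored. */
            sum += 0.299 * p[0] + 0.587 * p[1] + 0.114 * p[2];
        }
    }
    return sum / (double)(width * height);
}
```

A value near 0 means a dark frame and a value near 255 a bright one; how that maps to real ambient light depends on the camera's exposure behaviour.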

What if I want to do this for iOS?

I have a problem running this code on OS X 10.9.

I have compiled it with the ARC flags, but the photo-grabbed delegate method is not getting called, and the didOutputVideoFrame delegate method is not getting called either.

Thanks

-Sadiq

Is it possible to use my iPhone as the camera instead of the Mac’s default camera, the way QuickTime is able to mirror the phone’s screen?

This is very useful code, and I want to use it in my application.

Please explain how I can use it in my NSWindowController class, and which type of control I should use to display the camera view that I capture the image from.

Go ahead!